The flatMap() transformation in Listing 2 returns an RDD that contains one element for each word, split by a space character. The flatMap() method expects a function that accepts a String and returns an Iterable interface to a collection of Strings. In the Java 7 example, we create an anonymous inner class of type FlatMapFunction and override its call() method. The call() method is passed the input String and returns an Iterable reference to the results. The Java 7 example leverages the Arrays class's asList() method to create an Iterable interface to the String array returned by the String's split() method.
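The anonymous-inner-class pattern described here can be sketched in plain Java. This is a minimal illustration, not the code from Listing 2: the `LineSplitter` interface is a hypothetical stand-in for Spark's FlatMapFunction, declared locally so the example compiles without Spark on the classpath, and the class and method names are assumptions made for the example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class SplitSketch {

    // Hypothetical stand-in for Spark's FlatMapFunction<String, String>;
    // it only mirrors the "String in, Iterable<String> out" shape described in the text.
    interface LineSplitter {
        Iterable<String> call(String line);
    }

    // Java 7 style: an anonymous inner class that overrides call()
    static final LineSplitter SPLIT_ON_SPACE = new LineSplitter() {
        @Override
        public Iterable<String> call(String line) {
            // split() returns a String[]; asList() wraps it in a List, which is an Iterable
            return Arrays.asList(line.split(" "));
        }
    };

    // Copies the words produced by call() into a list so they are easy to inspect
    static List<String> words(String line) {
        List<String> out = new ArrayList<>();
        for (String word : SPLIT_ON_SPACE.call(line)) {
            out.add(word);
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(words("to be or not to be")); // prints [to, be, or, not, to, be]
    }
}
```

In a real Spark job the same call() body would sit inside a FlatMapFunction passed to flatMap(); the stand-in interface exists only to keep the sketch self-contained.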

You'll find that we perform operations on RDDs, in the form of Spark transformations, and ultimately we leverage Spark actions to translate an RDD into our desired result set. In this case, the transformation we want to apply to the RDD first is the flat map transformation. Transformations come in many flavors, but the most common are as follows:

- map() applies a function to each element in the RDD and returns an RDD of the result.
- flatMap() is similar to map() in that it applies a function individually to each element in the RDD. But rather than returning a single element, it returns an iterator with the return values. Spark then flattens the iterators of return values into one large result.
- filter() returns an RDD that contains only those elements that match the specified filter criteria.
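The difference between these three transformations can be illustrated with a small sketch. It uses Java 8 streams from `java.util.stream` rather than Spark RDDs, so it is only an analogy for the semantics described above; the class and method names are assumptions made for the example.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

class TransformationSketch {

    // map(): exactly one output element per input element (here, each line's length)
    static List<Integer> lineLengths(List<String> lines) {
        return lines.stream().map(String::length).collect(Collectors.toList());
    }

    // flatMap(): each line yields several words, which are flattened into one result
    static List<String> allWords(List<String> lines) {
        return lines.stream()
                .flatMap(line -> Arrays.stream(line.split(" ")))
                .collect(Collectors.toList());
    }

    // filter(): keep only the elements that match the predicate
    static List<String> wordsLongerThan(List<String> words, int minLength) {
        return words.stream()
                .filter(word -> word.length() > minLength)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("to be or", "not to be");
        System.out.println(lineLengths(lines));                  // prints [8, 9]
        System.out.println(allWords(lines));                     // prints [to, be, or, not, to, be]
        System.out.println(wordsLongerThan(allWords(lines), 2)); // prints [not]
    }
}
```

Two input lines produce two lengths under map() but six words under flatMap(), which is why flatMap() is the natural first step for a word count.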

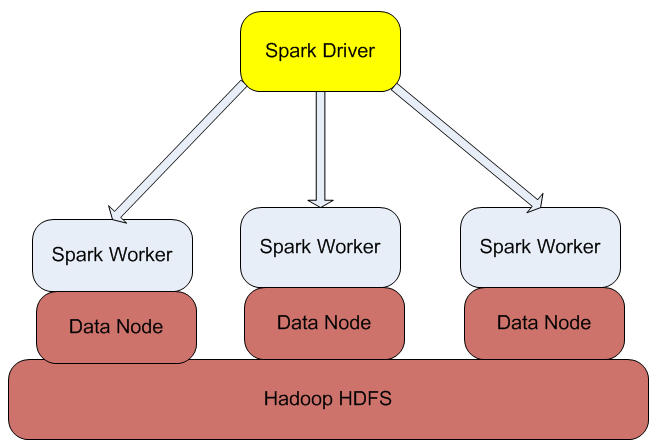

The first step is to leverage the JavaSparkContext's textFile() method to load our input from the specified file. This method reads the file from either the local file system or from a Hadoop Distributed File System (HDFS) and returns a resilient distributed dataset (RDD) of Strings. An RDD is Spark's core data abstraction and represents a distributed collection of elements.

    /*
     * Sample Spark application that counts the words in a text file
     */
    public static void wordCountJava7( String filename )

    // Define a configuration to use to interact with Spark
    SparkConf conf = new SparkConf().setMaster("local").setAppName("Work Count App");

    // Create a Java version of the Spark Context from the configuration
    JavaSparkContext sc = new JavaSparkContext(conf);

    // Load the input data, which is a text file read from the command line

    // Java 7 and earlier: transform the collection of words into pairs (word and 1)


Note the three plugins I added to the build directive:

- Setting the compilation level to Java 8 enables lambda support.
- Defining a main class that references the WordCount class instructs Maven to create an executable JAR for WordCount.
- Having Maven copy all dependencies to the target/lib directory enables the executable JAR file to run.

With that out of the way, Listing 2 shows the source code for the WordCount application. Note that it shows how to write the Spark code in both Java 7 and Java 8.